
File: chatbot-resume-streams.md | Updated: 11/15/2025

Source: https://ai-sdk.dev/docs/ai-sdk-ui/chatbot-resume-streams

Chatbot Resume Streams
==========================================================================================================

`useChat` supports resuming ongoing streams after page reloads. Use this feature to build applications with long-running generations.

Stream resumption is not compatible with abort functionality. Closing a tab or refreshing the page triggers an abort signal that will break the resumption mechanism. Do not use `resume: true` if you need abort functionality in your application. See troubleshooting for more details.

Stream resumption requires persistence for messages and active streams in your application. The AI SDK provides tools to connect to storage, but you need to set up the storage yourself.

The AI SDK provides:

- A `resume` option in `useChat` that automatically reconnects to active streams
- Access to the outgoing stream through the `consumeSseStream` callback
- Automatic HTTP requests to your resume endpoints

You build:

- Storage to track which stream belongs to each chat
- Redis to store the UIMessage stream
- Two API endpoints: POST to create streams, GET to resume them
- Integration with `resumable-stream` to manage Redis storage

To implement resumable streams in your chat application, you need:

- The `resumable-stream` package: handles the publisher/subscriber mechanism for streams
- A Redis instance: stores stream data (e.g. Redis through Vercel)
- A persistence layer: tracks which stream ID is active for each chat (e.g. a database)
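The persistence layer is yours to build. As a minimal sketch, here is an in-memory stand-in for the `@util/chat-store` module that the handlers below import. The `readChat`/`saveChat` names match those imports, but their exact shapes (the `StoredChat` type, the merge behavior) are assumptions. The merge matters because the POST handler calls `saveChat({ id, activeStreamId: streamId })` without `messages`. A `Map` only works within a single server process, so a real app would use a database.

```typescript
// Minimal message shape for this sketch; a real app would use UIMessage from 'ai'.
type StoredMessage = { id: string; role: 'system' | 'user' | 'assistant'; parts: unknown[] };

type StoredChat = {
  id: string;
  messages: StoredMessage[];
  activeStreamId: string | null;
};

// Hypothetical in-memory storage keyed by chat ID (single process, lost on restart).
const chats = new Map<string, StoredChat>();

export async function readChat(id: string): Promise<StoredChat> {
  // Unknown chats start empty with no active stream.
  return chats.get(id) ?? { id, messages: [], activeStreamId: null };
}

export async function saveChat(update: {
  id: string;
  messages?: StoredMessage[];
  activeStreamId?: string | null;
}): Promise<void> {
  const current = await readChat(update.id);
  // Merge partial updates so that saving only activeStreamId keeps the messages.
  chats.set(update.id, {
    id: update.id,
    messages: update.messages ?? current.messages,
    activeStreamId:
      update.activeStreamId !== undefined ? update.activeStreamId : current.activeStreamId,
  });
}
```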

1. Client-side: Enable stream resumption

Use the `resume` option in the `useChat` hook to enable stream resumption. When `resume` is `true`, the hook automatically attempts to reconnect to any active stream for the chat on mount:

app/chat/[chatId]/chat.tsx

```tsx
'use client';

import { useChat } from '@ai-sdk/react';
import { DefaultChatTransport, type UIMessage } from 'ai';

export function Chat({
  chatData,
  resume = false,
}: {
  chatData: { id: string; messages: UIMessage[] };
  resume?: boolean;
}) {
  const { messages, sendMessage, status } = useChat({
    id: chatData.id,
    messages: chatData.messages,
    resume, // Enable automatic stream resumption
    transport: new DefaultChatTransport({
      // You must send the id of the chat
      prepareSendMessagesRequest: ({ id, messages }) => {
        return {
          body: {
            id,
            message: messages[messages.length - 1],
          },
        };
      },
    }),
  });

  return <div>{/* Your chat UI */}</div>;
}
```

You must send the chat ID with each request (see `prepareSendMessagesRequest`).

When you enable `resume`, the `useChat` hook makes a GET request to `/api/chat/[id]/stream` on mount to check for and resume any active streams.
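To make the mount-time check concrete, the sketch below mimics it with plain `fetch`: a 204 means there is nothing to resume, and any other successful response body is the resumed stream. This illustrates the request/response contract only; it is not the AI SDK's internal code, and the `checkForActiveStream` name and injectable `fetchImpl` parameter are hypothetical.

```typescript
// Hypothetical helper that probes the resume endpoint the way the text describes.
async function checkForActiveStream(
  chatId: string,
  fetchImpl: typeof fetch = fetch,
): Promise<ReadableStream<Uint8Array> | null> {
  // Default endpoint pattern used when resume is enabled.
  const response = await fetchImpl(`/api/chat/${chatId}/stream`, { method: 'GET' });

  // 204 No Content: the chat has no active stream.
  if (response.status === 204) return null;

  if (!response.ok) {
    throw new Error(`Failed to resume stream: ${response.status}`);
  }

  // Any other successful response carries the resumed SSE stream.
  return response.body;
}
```

Injecting `fetchImpl` keeps the helper testable without a server.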

Let's start with the POST handler, which creates the resumable stream.

2. Create the POST handler

The POST handler creates resumable streams using the `consumeSseStream` callback:

app/api/chat/route.ts

```ts
import { openai } from '@ai-sdk/openai';
import { readChat, saveChat } from '@util/chat-store';
import {
  convertToModelMessages,
  generateId,
  streamText,
  type UIMessage,
} from 'ai';
import { after } from 'next/server';
import { createResumableStreamContext } from 'resumable-stream';

export async function POST(req: Request) {
  const {
    message,
    id,
  }: {
    message: UIMessage | undefined;
    id: string;
  } = await req.json();

  const chat = await readChat(id);
  let messages = chat.messages;

  messages = [...messages, message!];

  // Clear any previous active stream and save the user message.
  // Awaited so this write cannot land after the activeStreamId update below.
  await saveChat({ id, messages, activeStreamId: null });

  const result = streamText({
    model: openai('gpt-4o-mini'),
    messages: convertToModelMessages(messages),
  });

  return result.toUIMessageStreamResponse({
    originalMessages: messages,
    generateMessageId: generateId,
    onFinish: ({ messages }) => {
      // Clear the active stream when finished
      saveChat({ id, messages, activeStreamId: null });
    },
    async consumeSseStream({ stream }) {
      const streamId = generateId();

      // Create a resumable stream from the SSE stream
      const streamContext = createResumableStreamContext({ waitUntil: after });
      await streamContext.createNewResumableStream(streamId, () => stream);

      // Update the chat with the active stream ID
      await saveChat({ id, activeStreamId: streamId });
    },
  });
}
```
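The handler above trusts the request body and uses a non-null assertion (`message!`). A small guard lets a missing `id` or `message` fail fast instead of being appended to the stored chat. This is a minimal sketch; the `parseChatRequest` name and the loose message check are assumptions, and a schema validation library could do the same job.

```typescript
// Minimal message shape for this sketch; a real app would use UIMessage from 'ai'.
type IncomingMessage = { id: string; role: string; parts: unknown[] };

type ChatRequest = { id: string; message: IncomingMessage };

// Hypothetical guard for the POST body built by prepareSendMessagesRequest.
function parseChatRequest(body: unknown): ChatRequest | null {
  if (typeof body !== 'object' || body === null) return null;
  const { id, message } = body as { id?: unknown; message?: unknown };

  // The chat ID is required to look up and persist the chat.
  if (typeof id !== 'string' || id.length === 0) return null;

  // Check the fields the server relies on before appending the message.
  if (typeof message !== 'object' || message === null) return null;
  const m = message as Partial<IncomingMessage>;
  if (typeof m.id !== 'string' || typeof m.role !== 'string' || !Array.isArray(m.parts)) {
    return null;
  }

  return { id, message: m as IncomingMessage };
}
```

In the POST handler you would call `parseChatRequest(await req.json())` and return `new Response('Invalid request', { status: 400 })` when it yields `null`.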

3. Implement the GET handler

Create a GET handler at `/api/chat/[id]/stream` that:

- Reads the chat ID from the route params

- Loads the chat data to check for an active stream

- Returns 204 (No Content) if no stream is active

- Resumes the existing stream if one is found

app/api/chat/[id]/stream/route.ts

```ts
import { readChat } from '@util/chat-store';
import { UI_MESSAGE_STREAM_HEADERS } from 'ai';
import { after } from 'next/server';
import { createResumableStreamContext } from 'resumable-stream';

export async function GET(
  _: Request,
  { params }: { params: Promise<{ id: string }> },
) {
  const { id } = await params;

  const chat = await readChat(id);

  if (chat.activeStreamId == null) {
    // no content response when there is no active stream
    return new Response(null, { status: 204 });
  }

  const streamContext = createResumableStreamContext({
    waitUntil: after,
  });

  return new Response(
    await streamContext.resumeExistingStream(chat.activeStreamId),
    { headers: UI_MESSAGE_STREAM_HEADERS },
  );
}
```

The `after` function from Next.js allows work to continue after the response has been sent. This ensures that the resumable stream persists in Redis even after the initial response is returned to the client, enabling reconnection later.
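Outside Next.js there is no `after`, so you would pass some other `waitUntil` function, typically one your hosting platform provides. As a hedged sketch of what such a function has to do, the collector below registers background promises and lets a long-lived process await them before shutting down. The `createWaitUntil` name is hypothetical, and the assumption that `waitUntil` receives a promise should be checked against the `resumable-stream` documentation.

```typescript
// Hypothetical waitUntil replacement: tracks background work so it can be awaited later.
function createWaitUntil() {
  const pending = new Set<Promise<void>>();

  // Register a background promise; it removes itself from the set once settled.
  function waitUntil(promise: Promise<unknown>): void {
    // Swallow (but log) rejections so one failed task cannot break flush().
    const tracked: Promise<void> = promise.then(
      () => undefined,
      (error) => {
        console.error('background task failed', error);
      },
    );
    pending.add(tracked);
    tracked.then(() => pending.delete(tracked));
  }

  // Wait for every task registered so far (e.g. before process shutdown).
  async function flush(): Promise<void> {
    await Promise.all([...pending]);
  }

  return { waitUntil, flush, pendingCount: () => pending.size };
}
```

Under that assumption you would pass the returned `waitUntil` to `createResumableStreamContext({ waitUntil })` in place of `after`.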

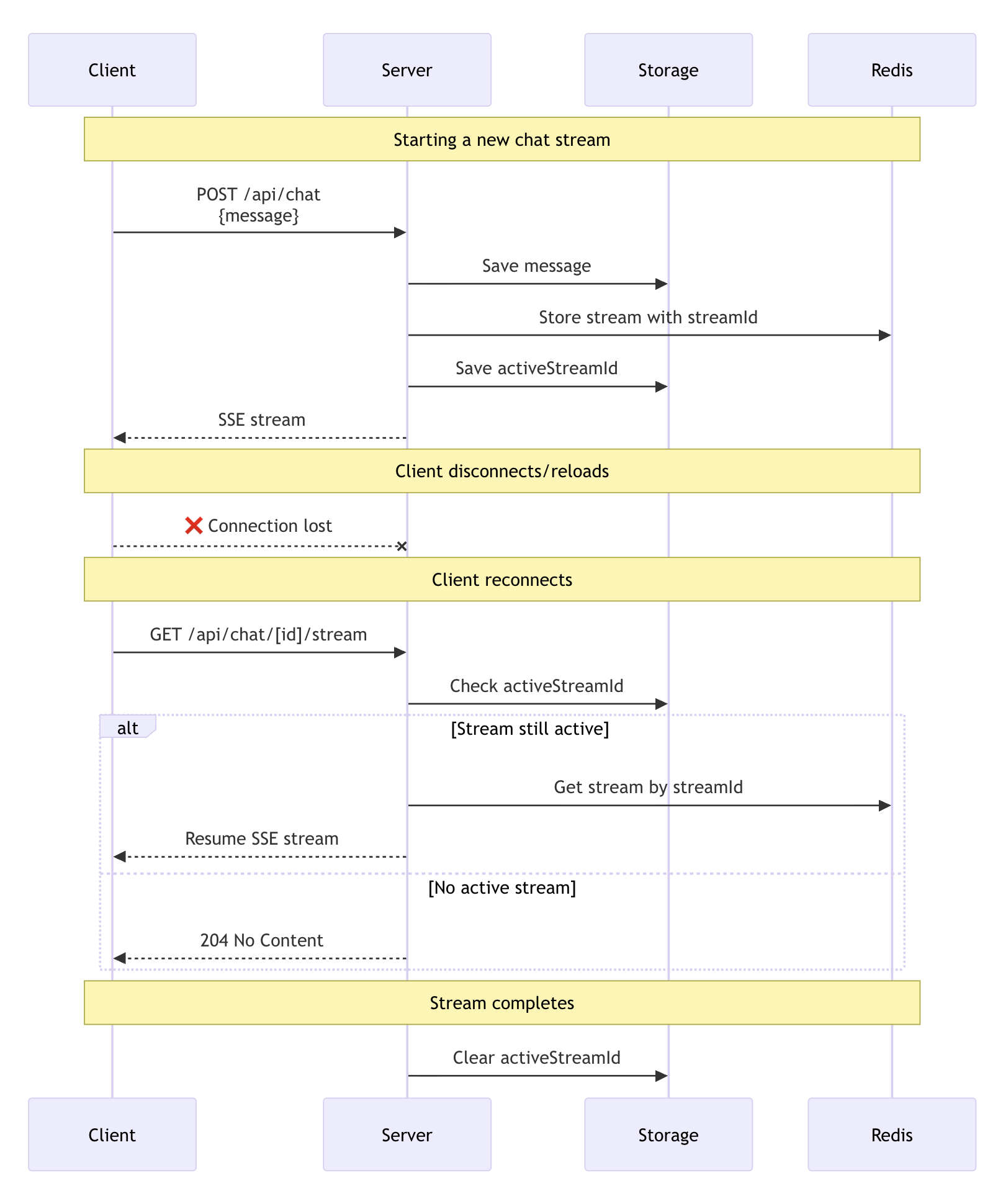

Request lifecycle

The diagram above shows the complete lifecycle of a resumable stream:

- Stream creation: When you send a new message, the POST handler uses `streamText` to generate the response. The `consumeSseStream` callback creates a resumable stream with a unique ID and stores it in Redis through the `resumable-stream` package.
- Stream tracking: Your persistence layer saves the `activeStreamId` in the chat data.
- Client reconnection: When the client reconnects (page reload), the `resume` option triggers a GET request to `/api/chat/[id]/stream`.
- Stream recovery: The GET handler checks for an `activeStreamId` and uses `resumeExistingStream` to reconnect. If no active stream exists, it returns a 204 (No Content) response.
- Completion cleanup: When the stream finishes, the `onFinish` callback clears the `activeStreamId` by setting it to `null`.
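The lifecycle above boils down to a few transitions on `activeStreamId`. The sketch below models them as pure functions over a hypothetical `ChatRecord` so the ordering is easy to follow; it leaves out Redis and the AI SDK entirely, and all names are illustrative only.

```typescript
// Hypothetical record mirroring what the persistence layer stores per chat.
type ChatRecord = { id: string; messageCount: number; activeStreamId: string | null };

// POST: save the user message and clear any previous stream.
function onUserMessage(chat: ChatRecord): ChatRecord {
  return { ...chat, messageCount: chat.messageCount + 1, activeStreamId: null };
}

// consumeSseStream: remember the new resumable stream.
function onStreamCreated(chat: ChatRecord, streamId: string): ChatRecord {
  return { ...chat, activeStreamId: streamId };
}

// GET: 204 when nothing is active, otherwise the stream to resume.
function resumeDecision(chat: ChatRecord): { status: 204 } | { status: 200; streamId: string } {
  return chat.activeStreamId == null
    ? { status: 204 }
    : { status: 200, streamId: chat.activeStreamId };
}

// onFinish: save the assistant message and clear the active stream.
function onStreamFinished(chat: ChatRecord): ChatRecord {
  return { ...chat, messageCount: chat.messageCount + 1, activeStreamId: null };
}
```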

By default, the `useChat` hook makes a GET request to `/api/chat/[id]/stream` when resuming. You can customize the endpoint, credentials, and headers with the `prepareReconnectToStreamRequest` option in `DefaultChatTransport`:

app/chat/[chatId]/chat.tsx

```tsx
'use client';

import { useChat } from '@ai-sdk/react';
import { DefaultChatTransport, type UIMessage } from 'ai';

export function Chat({
  chatData,
  resume = false,
}: {
  chatData: { id: string; messages: UIMessage[] };
  resume?: boolean;
}) {
  const { messages, sendMessage } = useChat({
    id: chatData.id,
    messages: chatData.messages,
    resume,
    transport: new DefaultChatTransport({
      // Customize reconnect settings (optional)
      prepareReconnectToStreamRequest: ({ id }) => {
        return {
          api: `/api/chat/${id}/stream`, // Default pattern
          // Or use a different pattern:
          // api: `/api/streams/${id}/resume`,
          // api: `/api/resume-chat?id=${id}`,
          credentials: 'include', // Include cookies/auth
          headers: {
            Authorization: 'Bearer token',
            'X-Custom-Header': 'value',
          },
        };
      },
    }),
  });

  return <div>{/* Your chat UI */}</div>;
}
```

This lets you:

- Match your existing API route structure

- Add query parameters or custom paths

- Integrate with different backend architectures
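If you choose the query-parameter pattern (`/api/resume-chat?id=${id}`), the GET handler has to read the chat ID from the request URL instead of from route params. A minimal sketch using the standard `URL` API; the `getChatIdFromRequestUrl` name is hypothetical.

```typescript
// Hypothetical helper for a GET handler mounted at /api/resume-chat.
function getChatIdFromRequestUrl(requestUrl: string): string | null {
  // Request.url is an absolute URL string, so URL can parse it directly.
  const id = new URL(requestUrl).searchParams.get('id');
  // Treat a missing or empty id as "no chat ID".
  return id ? id : null;
}
```

Inside the handler, `getChatIdFromRequestUrl(req.url)` returning `null` would map to a 400 response; otherwise the rest of the GET logic stays the same.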

- Incompatibility with abort: Stream resumption is not compatible with abort functionality. Closing a tab or refreshing the page triggers an abort signal that will break the resumption mechanism. Do not use `resume: true` if you need abort functionality in your application.
- Stream expiration: Streams in Redis expire after a set time (configurable in the `resumable-stream` package).
- Multiple clients: Multiple clients can connect to the same stream simultaneously.
- Error handling: When no active stream exists, the GET handler returns a 204 (No Content) status code.
- Security: Ensure proper authentication and authorization for both creating and resuming streams.
- Race conditions: Clear the `activeStreamId` when starting a new stream to prevent resuming outdated streams.
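A related race: the POST handler's `onFinish` clears `activeStreamId` unconditionally, so an older stream that finishes late could wipe the ID of a newer one. One way to guard against that, sketched here with a hypothetical in-memory map, is compare-and-clear: only reset the ID if it still matches the stream that finished. Wiring this into the handler would mean capturing the ID generated in `consumeSseStream` where `onFinish` can read it, and a real database would do the check and the write in one conditional update.

```typescript
// Hypothetical store: chat ID -> currently active stream ID.
const activeStreams = new Map<string, string | null>();

function setActiveStream(chatId: string, streamId: string | null): void {
  activeStreams.set(chatId, streamId);
}

// Compare-and-clear: reset only if the finishing stream is still the active one.
function clearActiveStreamIfCurrent(chatId: string, finishedStreamId: string): boolean {
  if (activeStreams.get(chatId) !== finishedStreamId) return false;
  activeStreams.set(chatId, null);
  return true;
}
```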
